ChamilleWhite/Getty Images

A major issue plaguing generative AI models is AI scraping, the practice by which AI companies capture data from internet sources, without the owners' permission, to train their models. AI scraping can have an especially negative impact on visual artists, whose work is scraped to generate new art in text-to-image models. Now, however, there may be a solution.

Researchers at the University of Chicago have created Nightshade, a new tool that gives artists the ability to "poison" their digital art in order to prevent developers from training AI tools on their work.


Using Nightshade, artists can make changes to the pixels in their art that are invisible to the human eye, but cause the generative AI model to break in "chaotic" and "unpredictable" ways, according to the MIT Technology Review, which got an exclusive preview of the research.

The prompt-specific attack manipulates what a generative AI model learns, causing the model to confuse subjects with one another and render useless outputs.

For example, it may learn that a dog is actually a cat, which would, in turn, cause the model to produce incorrect images that don't match the text prompt.
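To give a sense of how small an "invisible" pixel change can be, here is a minimal Python sketch. It is not Nightshade's method: the article does not describe how the tool chooses its changes, and the random noise below is only a stand-in. The function names and the `epsilon` budget are hypothetical, chosen for illustration.

```python
import random

def perturb(pixels, epsilon=2, seed=0):
    """Return a copy of `pixels` (ints in 0-255) with each value
    shifted by at most `epsilon`. Random noise is a stand-in only;
    it is NOT how Nightshade picks its changes."""
    rng = random.Random(seed)
    out = []
    for value in pixels:
        delta = rng.randint(-epsilon, epsilon)
        # Clamp so the result is still a valid 8-bit pixel value.
        out.append(min(255, max(0, value + delta)))
    return out

def max_abs_diff(a, b):
    """Largest per-pixel change between two equally sized images."""
    return max(abs(x - y) for x, y in zip(a, b))

# Hypothetical grayscale pixel values.
original = [12, 200, 255, 0, 87, 143]
poisoned = perturb(original)

# The largest change never exceeds the epsilon budget.
print(max_abs_diff(original, poisoned))
```

A shift of one or two levels out of 255 is the kind of change a viewer would be unlikely to notice, which is the property the article attributes to Nightshade's edits.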

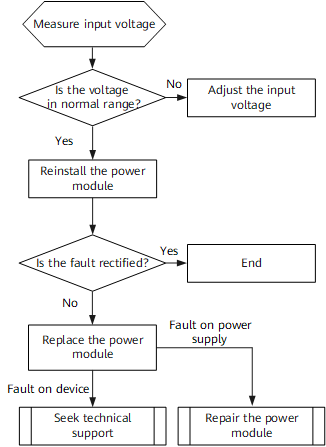

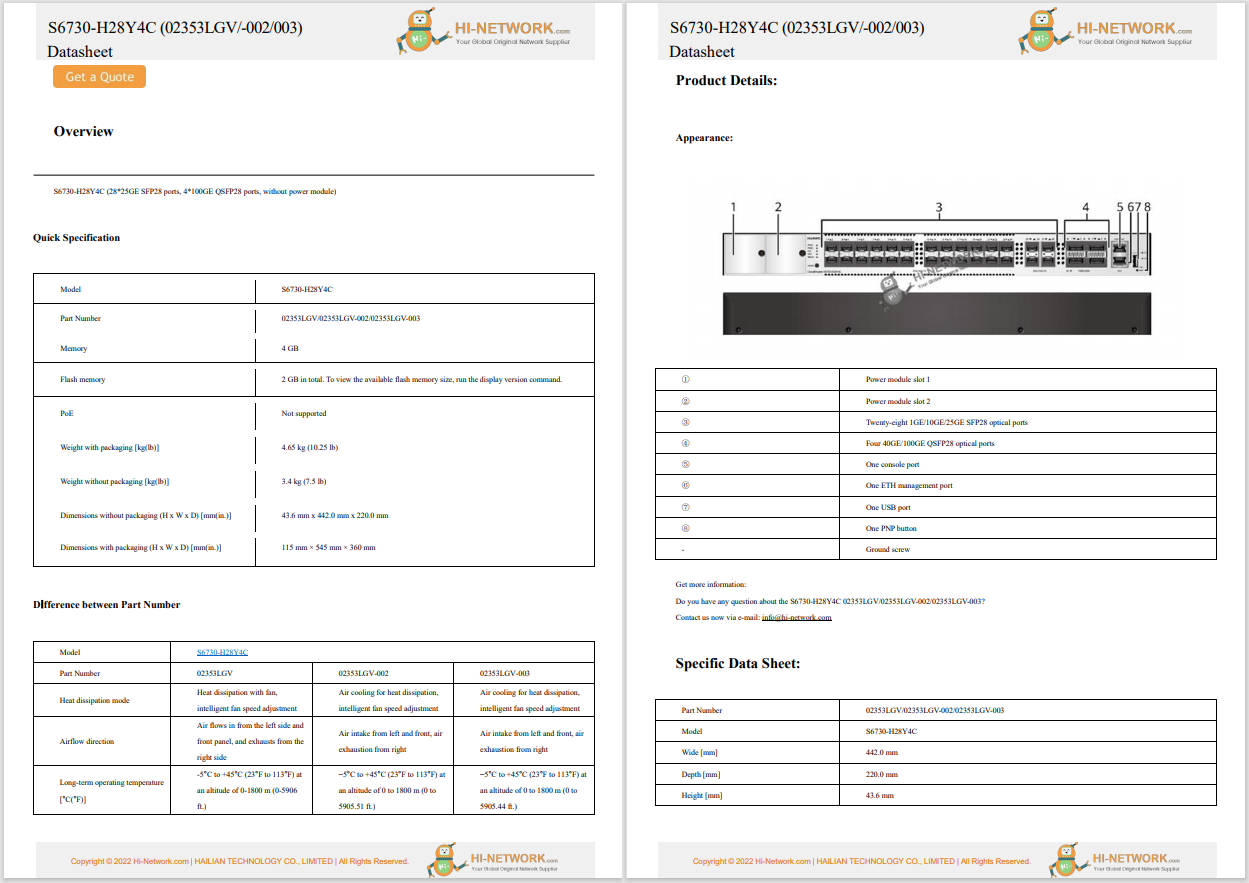

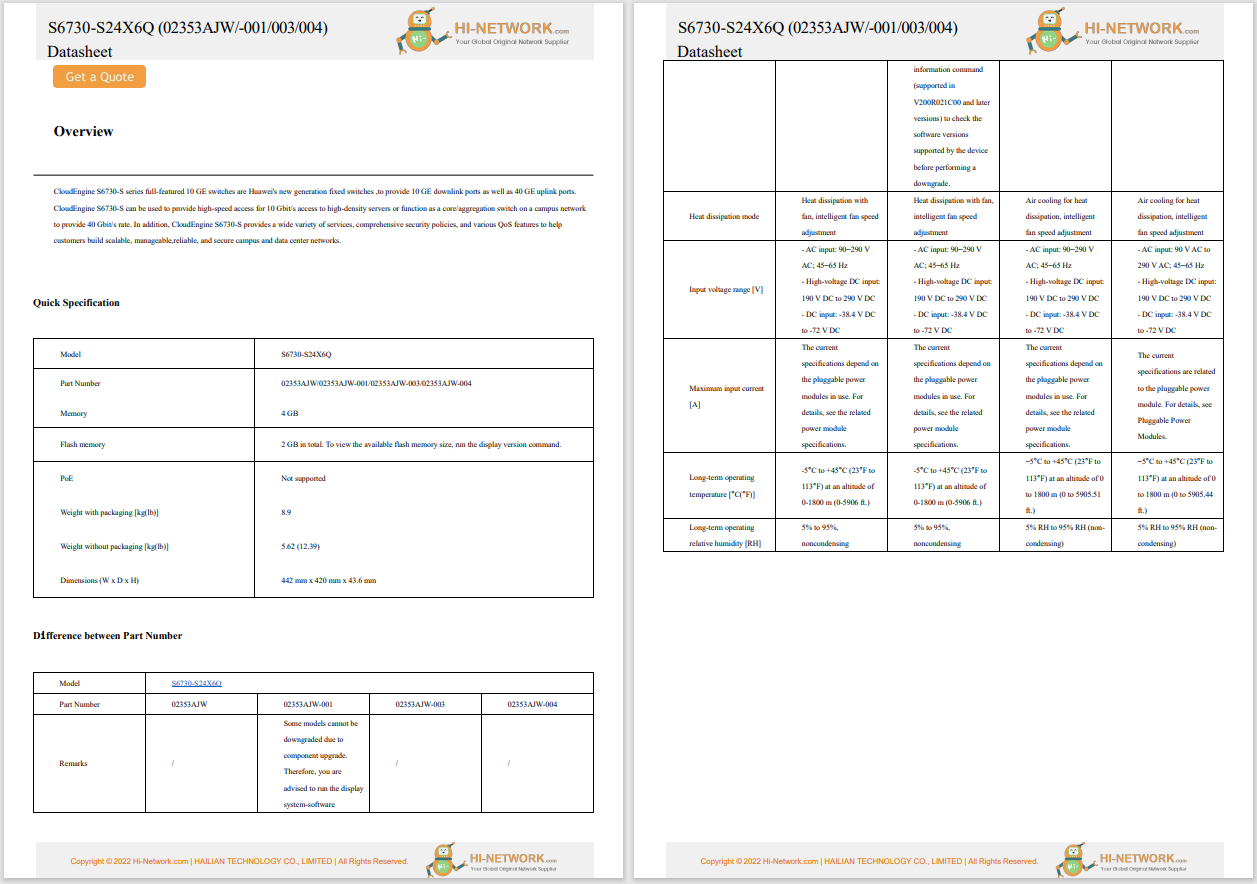

According to the research paper, fewer than 100 Nightshade poison samples can corrupt a Stable Diffusion prompt, as seen in the images above.

In addition to corrupting the specific term, the poisoning also bleeds through to associated terms.

In the example above, the term "dog" would be affected, along with related terms such as "puppy," "hound," and "husky," according to the research paper.

The connection between the terms doesn't have to be as direct as in that example, either. The paper describes how, when the term "fantasy art" was poisoned, so was the related phrase "a painting by Michael Whelan," a well-known creator of fantasy art.
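One way to picture this bleed-through, assuming related terms sit close together in a model's text-embedding space (the article does not state the mechanism), is to compare terms by cosine similarity. The three-dimensional vectors and the 0.9 threshold in this Python sketch are invented for illustration and do not come from any real model.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical embeddings, made up for this example.
embeddings = {
    "dog":   [0.90, 0.10, 0.00],
    "puppy": [0.85, 0.20, 0.05],
    "husky": [0.80, 0.15, 0.10],
    "car":   [0.05, 0.10, 0.95],
}

def related_terms(target, threshold=0.9):
    """Terms whose embedding lies close to the target's and so,
    under this assumption, might share its corruption."""
    base = embeddings[target]
    return sorted(
        term for term, vec in embeddings.items()
        if term != target and cosine(base, vec) >= threshold
    )

print(related_terms("dog"))  # → ['husky', 'puppy']
```

In this toy setup, poisoning "dog" would plausibly spill over to "puppy" and "husky" but leave an unrelated term like "car" alone.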

Model trainers could deploy several potential defenses, including filtering high-loss data, poison detection methods, and poison removal methods, but none of the three is entirely effective.
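As a rough illustration of the first defense, filtering high-loss data, the Python sketch below drops training samples whose loss is unusually high. The cutoff rule (mean plus `k` standard deviations), the sample names, and the loss values are all assumptions made for this example, not details from the paper.

```python
from statistics import mean, stdev

def filter_high_loss(samples, k=1.5):
    """Keep samples whose loss is at most mean + k * stdev of all
    losses. The cutoff rule is an assumption for illustration."""
    losses = [loss for _, loss in samples]
    cutoff = mean(losses) + k * stdev(losses)
    return [(name, loss) for name, loss in samples if loss <= cutoff]

# Hypothetical (sample id, training loss) pairs.
samples = [
    ("img_01", 0.31), ("img_02", 0.28), ("img_03", 0.35),
    ("img_04", 0.30), ("img_05", 0.33), ("img_06", 1.90),
]

kept = filter_high_loss(samples)
print([name for name, _ in kept])  # img_06 is dropped as an outlier
```

A poisoned image whose loss looks ordinary would pass this check untouched, which fits the article's point that such defenses are not entirely effective.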


Furthermore, the poisoned data is difficult to remove from the model, since the AI company would have to find and delete each corrupted sample individually.

Nightshade has the potential not only to deter AI companies from using data without permission but also to encourage users to exercise caution when employing any of these generative AI models.

Other attempts have been made to mitigate the issue of artists' work being used without their permission. Some AI image-generating models, such as Getty Images' image generator and Adobe Firefly, train only on images that are either approved by the artist or open source, and they have a compensation program in place in return.
